Mistral 7B Prompt Template

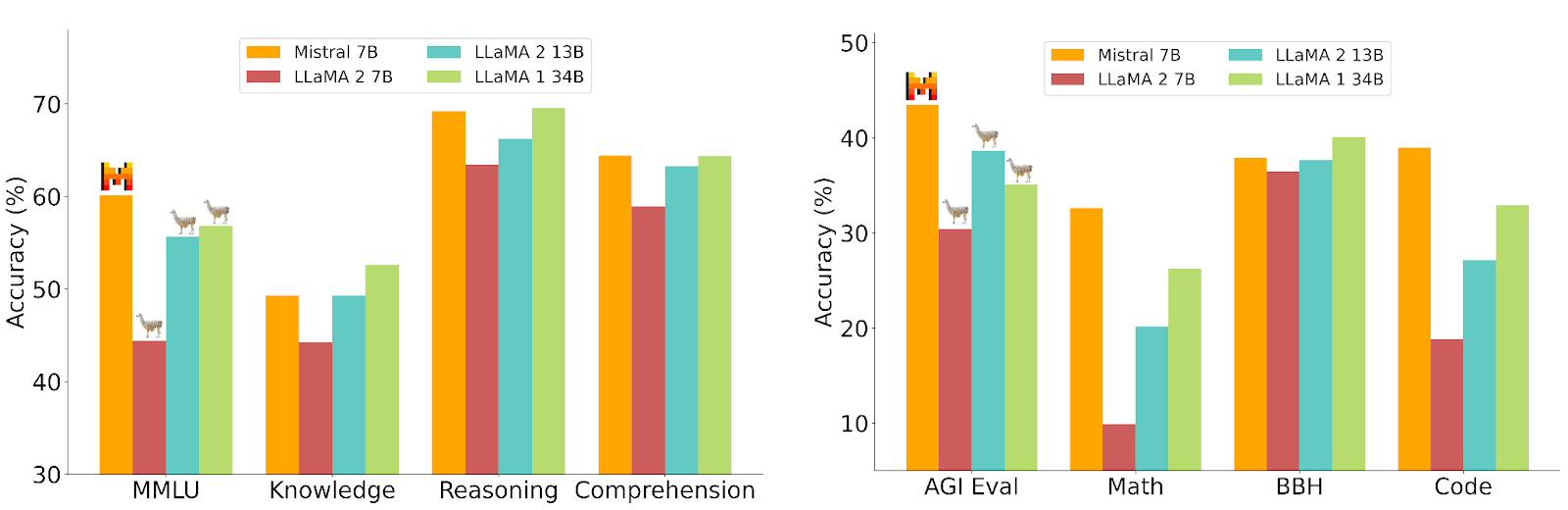

In this guide, we provide an overview of the Mistral 7B LLM and how to prompt it. We describe the process of getting the model up and running, then cover the details that matter for prompting it properly. Along the way you'll find examples of various prompting techniques, technical insights, and best practices for prompt engineering with 7B LLMs. The material is aimed at developers and tech enthusiasts.

Let's implement the inference code for the Mistral 7B model in Google Colab. We'll use the free tier, which provides a single T4 GPU, and load the model from Hugging Face.
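A minimal sketch of that Colab workflow follows. It assumes `torch`, `transformers`, `accelerate`, and `bitsandbytes` are installed, and that the checkpoint `mistralai/Mistral-7B-Instruct-v0.2` (an assumed model ID; substitute the one you use, which may require a Hugging Face login) can be downloaded. `estimate_weight_gb`, `load_model`, and `generate` are hypothetical names. The arithmetic explains the quantization choice. Roughly 7.3 billion parameters at 16 bits is about 14.6 GB of weights alone, which leaves little headroom on a 16 GB T4. The sketch therefore loads the model in 4-bit through `BitsAndBytesConfig`.

```python
# Sketch: run Mistral 7B Instruct on a single Colab T4 GPU (16 GB).
# Assumptions: torch, transformers, accelerate and bitsandbytes are installed,
# and MODEL_ID (an assumed checkpoint name) can be downloaded from Hugging Face.
from functools import lru_cache

MODEL_ID = "mistralai/Mistral-7B-Instruct-v0.2"  # assumed checkpoint; swap as needed


def estimate_weight_gb(num_params: float, bits_per_param: int) -> float:
    """Hypothetical helper: memory for the weights alone, in GB (1e9 bytes)."""
    return round(num_params * bits_per_param / 8 / 1e9, 2)


@lru_cache(maxsize=1)
def load_model():
    """Load tokenizer and 4-bit model once; later calls reuse the cached pair."""
    # Heavy imports are deferred so estimate_weight_gb works without a GPU stack.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    model = AutoModelForCausalLM.from_pretrained(
        MODEL_ID,
        # 4-bit weights need only a few GB; compute runs in fp16 because the
        # T4 has no native bfloat16 support.
        quantization_config=BitsAndBytesConfig(
            load_in_4bit=True, bnb_4bit_compute_dtype=torch.float16
        ),
        device_map="auto",  # lets accelerate place layers on available devices
    )
    return tokenizer, model


def generate(prompt: str, max_new_tokens: int = 256) -> str:
    tokenizer, model = load_model()
    # The chat template inserts the [INST] markers and special tokens for us.
    messages = [{"role": "user", "content": prompt}]
    input_ids = tokenizer.apply_chat_template(
        messages, add_generation_prompt=True, return_tensors="pt"
    ).to(model.device)
    # Greedy decoding keeps outputs reproducible when comparing prompts.
    output = model.generate(input_ids, max_new_tokens=max_new_tokens, do_sample=False)
    # Decode only the newly generated tokens, not the echoed prompt.
    return tokenizer.decode(output[0][input_ids.shape[-1]:], skip_special_tokens=True)
```

In a Colab cell, `print(generate("Explain what a prompt template is in one sentence."))` downloads the weights on the first call. Caching `load_model` means later calls reuse the same model instead of reloading it.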
Mistral has released several tokenizer versions alongside its models. We'll delve into these tokenizers, clear up common sources of debate, and explore how they work, the proper chat template to use with each one, and their story within the community.

It's recommended to use `tokenizer.apply_chat_template` to prepare the tokens appropriately for the model. You can use the following Python code to check the prompt template for any model: `from transformers import AutoTokenizer`, then `tokenizer = …`.

Other tools handle templates for you as well. LiteLLM supports Hugging Face chat templates and will automatically check whether your Hugging Face model has a registered chat template, and models from the Ollama library can be customized with a prompt.
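A minimal sketch of checking a model's prompt template with `transformers` follows. It assumes the library is installed and the model can be downloaded. `show_prompt` and `roles_alternate` are hypothetical helper names. `tokenizer.chat_template` and `apply_chat_template(..., tokenize=False)` are standard tokenizer features. The alternation check mirrors a rule in the templates shipped with early Mistral Instruct checkpoints, which raise an error unless turns go user, assistant, user, and so on.

```python
# Sketch: inspect the prompt a model's chat template actually produces.
# Assumptions: transformers is installed and the model ID can be downloaded;
# show_prompt and roles_alternate are hypothetical helper names.


def roles_alternate(messages: list[dict]) -> bool:
    """True if turns go user, assistant, user, ... as early Mistral templates require."""
    return all(
        m["role"] == ("user" if i % 2 == 0 else "assistant")
        for i, m in enumerate(messages)
    )


def show_prompt(model_id: str, messages: list[dict]) -> str:
    """Print the raw Jinja template and return the rendered prompt string."""
    # Fail fast, before any download, on conversations the template would reject.
    if not roles_alternate(messages):
        raise ValueError("roles must alternate user/assistant, starting with user")
    from transformers import AutoTokenizer  # deferred: only needed when called

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    print(tokenizer.chat_template)  # None if no template is registered
    # tokenize=False returns text instead of token IDs, so the markers are visible.
    return tokenizer.apply_chat_template(messages, tokenize=False)


EXAMPLE_MESSAGES = [
    {"role": "user", "content": "What is a prompt template?"},
    {"role": "assistant", "content": "A fixed layout for wrapping chat turns."},
    {"role": "user", "content": "Show me Mistral's."},
]
```

For example, `print(show_prompt("mistralai/Mistral-7B-Instruct-v0.1", EXAMPLE_MESSAGES))` prints the template and the rendered conversation. The model ID here is an assumption; substitute the checkpoint you are using.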
Learn the essentials of Mistral prompt syntax through clear examples and concise explanations, and explore Mistral prompt templates for efficient and effective interactions with the model. To evaluate the ability of the model to avoid … The guide also includes tips, applications, limitations, papers, and additional reading materials.
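To make the syntax concrete, here is a hand-rolled sketch of the `[INST] ... [/INST]` layout that Mistral 7B Instruct's chat template produces. It assumes the `<s>`/`</s>` BOS/EOS strings used by that tokenizer. Exact whitespace differs between template versions, so treat `apply_chat_template` as the reference. `build_mistral_prompt` is a hypothetical name. Folding an optional system prompt into the first user turn is an assumed workaround, because the original template has no system role.

```python
# Sketch: hand-build a Mistral-Instruct-style prompt string.
# Assumption: this approximates the [INST] layout of Mistral 7B Instruct's chat
# template; whitespace differs between template versions, so compare it with
# tokenizer.apply_chat_template(..., tokenize=False) before relying on it.
from typing import Optional

BOS, EOS = "<s>", "</s>"


def build_mistral_prompt(messages: list[dict], system: Optional[str] = None) -> str:
    """Render turns as <s>[INST] user [/INST]answer</s>[INST] user [/INST]."""
    prompt = BOS
    for i, message in enumerate(messages):
        expected = "user" if i % 2 == 0 else "assistant"
        if message["role"] != expected:
            raise ValueError("roles must alternate user/assistant, starting with user")
        content = message["content"].strip()
        if expected == "user":
            # Assumed workaround: the template has no system role, so optional
            # system text is prepended to the first user turn.
            if i == 0 and system:
                content = f"{system.strip()}\n\n{content}"
            prompt += f"[INST] {content} [/INST]"
        else:
            # Each completed assistant answer is closed with the EOS token.
            prompt += f"{content}{EOS}"
    return prompt
```

For example, `build_mistral_prompt([{"role": "user", "content": "Hi"}])` returns `<s>[INST] Hi [/INST]`. If you tokenize such a string yourself, pass `add_special_tokens=False` so the tokenizer does not prepend a second `<s>`.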
Related projects cover using a private LLM (Llama 2), with Jupyter notebooks on loading and indexing data, creating prompt templates, building CSV agents, and using retrieval QA chains to query custom data.
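The retrieval QA workflow mentioned above can be sketched in plain Python. This is a swapped-in simplification: word-overlap scoring stands in for a real embedding index and retrieval chain. `retrieve` and `build_rag_prompt` are hypothetical names. The sketch only shows the data flow: pick the most relevant snippets of your custom data, then place them with the question inside a single `[INST]` block.

```python
# Sketch: a tiny retrieval-QA prompt for querying custom data.
# Assumption: naive word-overlap scoring stands in for the embedding index and
# retrieval QA chain a real project would use; it only illustrates the data flow.
import re


def tokenize(text: str) -> set[str]:
    """Lowercase alphanumeric words, used as a crude relevance signal."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most words with the question."""
    q = tokenize(question)
    # sorted() is stable, so ties keep their original document order.
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_rag_prompt(question: str, documents: list[str], k: int = 2) -> str:
    context = "\n".join(f"- {d}" for d in retrieve(question, documents, k))
    # Context and question go into a single [INST] block for an instruct model.
    return (
        "<s>[INST] Answer the question using only the context below.\n"
        f"Context:\n{context}\n\nQuestion: {question} [/INST]"
    )
```

Swapping `retrieve` for a vector-store lookup would leave `build_rag_prompt` unchanged, which is the part that depends on the Mistral prompt format.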

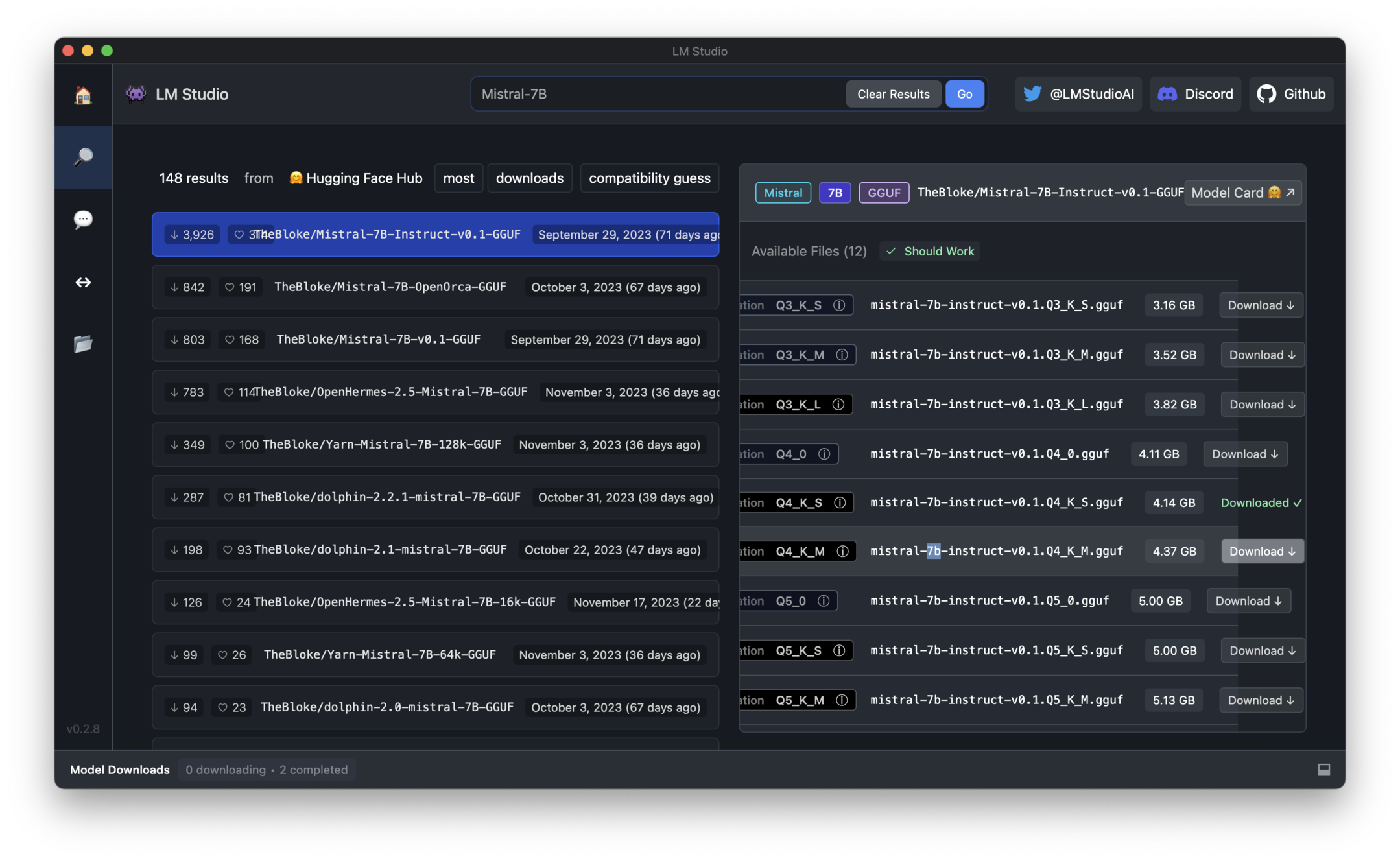

Mistral 7B Instruct Model library
