Tokenizer Apply Chat Template

Tokenizer Apply Chat Template - A chat template is part of the tokenizer and specifies how to convert a conversation, represented as a list of messages, into a single tokenizable string in the format the model expects. The apply_chat_template() function performs that conversion, turning the messages into a format the model can understand. If you have any chat models, you should set their tokenizer.chat_template attribute, test it using apply_chat_template(), and then push the updated tokenizer to the Hub. You can then use that model and tokenizer in ConversationalPipeline, or call tokenizer.apply_chat_template() directly to format chats for inference or training. Tools/function calling through apply_chat_template is supported for a few selected models. The notebook these notes draw on demonstrated how to apply chat templates to different models, including SmolLM2.
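A minimal sketch of the call described above. The helper name `render_chat` and the sample messages are hypothetical; the only library call it relies on is the tokenizer's `apply_chat_template` method with its `tokenize` and `add_generation_prompt` arguments.

```python
from typing import Any, Dict, List

Message = Dict[str, str]


def render_chat(tokenizer: Any, messages: List[Message]) -> str:
    """Format a conversation as one string using the tokenizer's chat template.

    `tokenizer` is assumed to be a Hugging Face tokenizer, or any object that
    exposes a compatible `apply_chat_template` method.
    """
    return tokenizer.apply_chat_template(
        messages,
        tokenize=False,              # return text rather than token ids
        add_generation_prompt=True,  # end with the start of an assistant turn
    )


# Hypothetical conversation used only for illustration.
messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "What is a chat template?"},
]
```

With Transformers installed, loading a tokenizer through `AutoTokenizer.from_pretrained(...)` (for example with a SmolLM2 instruct checkpoint such as `HuggingFaceTB/SmolLM2-135M-Instruct`, which is an assumption and not named in the text) and calling `print(render_chat(tokenizer, messages))` should show the formatted prompt.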
Once the conversation has been formatted, the text is tokenized and the tokens are encoded (converted into integers). The goal of chat templates is that tokenizers should handle chat formatting just as easily as they handle tokenization, and structuring interactions this way helps keep model behavior consistent. If a model does not have a chat template set, but there is a default template for its model class, the ConversationalPipeline class and methods like apply_chat_template will use the class template instead.
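Because a missing template either falls back to a class default or fails outright, one cautious pattern is to check for a template before formatting. The sketch below is only an illustration: `ensure_chat_template` and its `fallback_template` parameter are hypothetical names, and the only real attribute it touches is `tokenizer.chat_template`.

```python
from typing import Any, Optional


def ensure_chat_template(tokenizer: Any, fallback_template: Optional[str] = None) -> str:
    """Return the tokenizer's chat template, installing a fallback if none is set.

    Raises ValueError when no template is set and no fallback is supplied,
    so the problem surfaces before any prompt is formatted.
    """
    template = getattr(tokenizer, "chat_template", None)
    if template:
        return template
    if fallback_template is None:
        raise ValueError(
            "tokenizer.chat_template is not set and no fallback template was provided"
        )
    # Assumption: the caller has verified that the fallback matches the
    # format the model was trained on.
    tokenizer.chat_template = fallback_template
    return fallback_template
```

Failing early here is a design choice: a silently mismatched template still produces text, but not text in the format the model was trained on.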
Chat templates are strings containing a Jinja template that specifies how to format a conversation for a given model into a single tokenizable sequence. Among other things, model tokenizers now optionally contain the key chat_template in the tokenizer_config.json file, which means you can just load a tokenizer and call apply_chat_template on it. When no template is available, the call fails with "Cannot use apply_chat_template() because tokenizer.chat_template is not set and no template argument was passed!" This error states plainly that in newer versions the tokenizer no longer includes a default chat template, so you must either pass a template explicitly or set tokenizer.chat_template; the root of the problem lies in how the transformers library source code handles chat templates.
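To make the Jinja description concrete, here is a sketch of a ChatML-style template and a helper that assigns it. The template is an illustrative assumption, not the template of any particular model, and `set_chat_template` is a hypothetical helper; the only real attribute involved is `tokenizer.chat_template`.

```python
from typing import Any

# ChatML-style Jinja template (illustrative). It loops over `messages`, wraps
# each one in <|im_start|>/<|im_end|> markers, and appends the start of an
# assistant turn when `add_generation_prompt` is true.
CHATML_TEMPLATE = (
    "{% for message in messages %}"
    "{{ '<|im_start|>' + message['role'] + '\n' + message['content'] + '<|im_end|>' + '\n' }}"
    "{% endfor %}"
    "{% if add_generation_prompt %}"
    "{{ '<|im_start|>assistant\n' }}"
    "{% endif %}"
)


def set_chat_template(tokenizer: Any, template: str = CHATML_TEMPLATE) -> Any:
    """Assign a Jinja chat template to the tokenizer and return the tokenizer."""
    tokenizer.chat_template = template
    return tokenizer
```

After assigning a template, it is worth calling `apply_chat_template()` on a short conversation to check the output before saving the tokenizer or pushing it to the Hub.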
The add_generation_prompt argument is used to add a generation prompt: the tokens that mark the start of an assistant turn, so that the model writes a reply rather than continuing the user's message. The end of sequence can be filtered out of generated output by checking whether the last token is tokenizer.eos_token (or tokenizer.eos_token_id when working with token ids).
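The two ideas above can be sketched in plain Python without loading a model. `render_chatml` imitates what a ChatML-style template does with the generation-prompt flag; it is an illustration only, not the library's implementation, and both function names are hypothetical.

```python
from typing import Dict, List


def render_chatml(messages: List[Dict[str, str]], add_generation_prompt: bool = False) -> str:
    """Pure-Python imitation of a ChatML-style chat template (illustration only)."""
    text = "".join(
        f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n" for m in messages
    )
    if add_generation_prompt:
        # Open an assistant turn so the model continues with its reply.
        text += "<|im_start|>assistant\n"
    return text


def strip_trailing_eos(token_ids: List[int], eos_token_id: int) -> List[int]:
    """Return a copy of token_ids without a single trailing end-of-sequence id."""
    if token_ids and token_ids[-1] == eos_token_id:
        return token_ids[:-1]
    return list(token_ids)


chat = [{"role": "user", "content": "Hi"}]
print(repr(render_chatml(chat, add_generation_prompt=True)))
# '<|im_start|>user\nHi<|im_end|>\n<|im_start|>assistant\n'
print(strip_trailing_eos([5, 6, 2], eos_token_id=2))
# [5, 6]
```

In real use the end-of-sequence id would come from `tokenizer.eos_token_id` rather than a hard-coded value.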
apply_chat_template() with tokenize=False returns incorrect string

THUDM/chatglm36b · Add support for tokenizer.chat_template

· Add "chat_template" to tokenizer_config.json

microsoft/Phi3mini4kinstruct · tokenizer.apply_chat_template

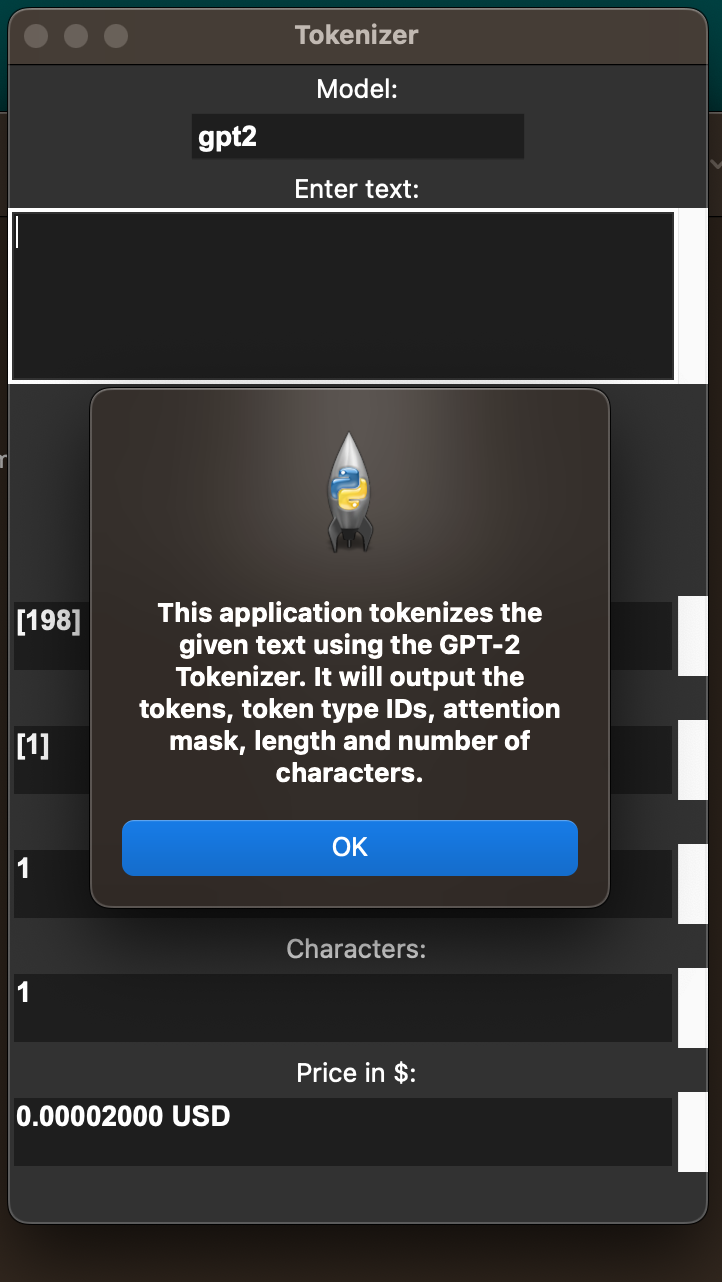

Chatgpt 3 Tokenizer

Using add_generation_prompt with tokenizer.apply_chat_template does not

mkshing/opttokenizerwithchattemplate · Hugging Face

`tokenizer.apply_chat_template` not working as expected for Mistral7B


feat Use `tokenizer.apply_chat_template` in HuggingFace Invocation
